Increased Focus on Digital Experience Management Prompts New Research, Done Jointly by Dennis Drogseth and Julie Craig

By Dennis Drogseth on Aug 26, 2016 9:11:12 AM

Digital and user experience management has been the focus of multiple EMA research studies over the years, both as a stand-alone topic and as part of EMA’s ongoing examination of critical trends such as digital and operational transformation, IT performance optimization, and, of course, application performance management (APM). In many respects, optimizing the digital experience [...]

Windows 10…One Year Later

By Steve Brasen on Aug 18, 2016 1:58:29 PM

Time flies when you’re upgrading operating systems. It has officially been a year since Microsoft introduced Windows 10 to much fanfare and approbation. Acceptance of the platform was almost immediate, with many users simply grateful to migrate away from the much-maligned Windows 8 environment. The core problem with the previous edition of Microsoft’s flagship OS was that its GUI was designed to work better on a tablet than on a PC, which infuriated users who had grown used to the Windows 7 look and feel on their laptops and desktops. Windows 10 gave Microsoft’s core audience exactly what it wanted: a unified code base that enables the same applications to run on all device architectures (desktops, laptops, tablets, and smartphones) while retaining the look and feel of the classic Windows 7 desktop that users had come to appreciate.

Linux on Power—Poised for Greatness

By Steve Brasen on Apr 22, 2016 11:12:45 AM

For two decades, IBM’s Power Systems family of high-performance servers has been considered the premier alternative to x86-based systems. Combining fast processing, high availability, and rapid scalability, Power Systems are optimized to support big data and cloud architectures. Popularly deployed to run IBM’s AIX and IBM i operating systems, the platform has seen stiff competition in recent years from x86-based Linux systems. In 2013, IBM responded to this challenge by investing a billion dollars in enhancements to the Power line that would support Linux operating systems and open source technologies. This bold move was hailed as a strategy that would greatly improve the platform’s attractiveness and drive broader adoption.

2016: Looking Ahead at ITSM—Want to Place Any Bets?

By Dennis Drogseth on Jan 22, 2016 12:52:29 PM

I thought I’d begin the year by making some predictions about what to look for in 2016 in the area of IT service management (ITSM). For those of you who have been following my blogs with any regularity, and particularly for those who sat in on our webinar for the research report “What Is the [...]

Why Analytics and Automation Are Central to ITSM Transformation

By Dennis Drogseth on Nov 9, 2015 11:12:00 AM

In research done earlier this year, we looked at changing patterns of IT service management (ITSM) adoption across a population of 270 respondents in North America and Europe. One of the standout themes that emerged from our findings was the need for the service desk to become a more automated and analytically empowered center of [...]

Digital Transformation and the New War Room

By Dennis Drogseth on Nov 3, 2015 10:42:46 AM

In August, EMA surveyed 306 respondents in North America, England, France, Germany, Australia, China, and India about digital and IT transformation. Part of the goal was to create a heat map of what digital and IT transformation meant in the minds of both IT and business stakeholders. We targeted mostly leadership roles, but also [...]

User Experience Matters in Self-Service Provisioning

By Steve Brasen on Oct 26, 2015 12:08:22 PM

If you’re like me, you increasingly rely on online shopping to replace the more arduous task of physical in-store shopping. I find this is particularly true during the holiday season, when the idea of fighting traffic and elbowing crowds to desperately search numerous shops for just the right gift for Aunt Phillis (who’s just going to hate whatever she receives anyway) gives way to the more idyllic setting of web-surfing multiple stores simultaneously from the privacy of your home, while the dulcet tones of Nat King Cole playing gently in the background lull you into the holiday spirit (a little spiced eggnog on the side doesn’t hurt either). But have you ever stopped to consider why you shop at some websites and not at others? Certainly item prices have something to do with it, as does the breadth of product selection. However, there is almost certainly a third element involved, one of which you may not even be consciously aware: the quality of the online shopping experience directly affects the likelihood that you (and other consumers) will make a purchase. Websites that are friendly, professional, and easy to use are far more likely to produce sales than those that are confusing and difficult to navigate.

Top 5 Reasons IT Administrators Are Working Too Hard Managing Endpoints

By Steve Brasen on Oct 9, 2015 10:25:18 AM

IT administration is a thankless job. Let’s face it: the only time admins gain any recognition is when something goes wrong. In fact, the most successful IT administrators proactively manage very stable environments in which few failures or performance degradations occur. Unfortunately, this is rarely the case; it is far more common for admins to get stuck in a break/fix cycle of reactive “firefighting” in which problems are never truly resolved and are destined to recur. Making matters worse, growing requirements for mobility, business agility, high performance, and high availability have substantially increased IT administrator workloads. With this kind of pressure, it’s no wonder IT professionals are frustrated.

Running Containers Doesn’t Have To Mean Running Blind

By Dan Twing on Oct 6, 2015 2:17:15 PM

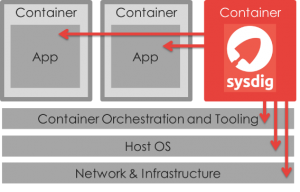

The idea of containers has been around for a long time in various forms on various operating systems. It has been part of the Linux kernel since version 2.6.24 was released in 2008. However, containers did not become mainstream until a couple of years ago, when Docker was first released in March 2013. Docker introduced container management tools and a packaging format that made container technologies accessible to developers without Linux kernel expertise. By simplifying the way applications are packaged, Docker made containers mainstream and turned them into one of the hottest trends in application development and deployment. While this has big advantages, containers are still early in their lifecycle and lack operational maturity. The ease with which Docker images can be created leads to image sprawl, a problem previously seen with VMs, and makes managing the security and compliance of these images even harder. Container environments do not integrate well with existing developer tools, which complicates team development because there is no staging and versioning for promoting images from preproduction to production. Containers also do not integrate with existing monitoring tools, which complicates management. However, an ever-growing Docker community is developing new tools that target Docker as an application delivery format and execution environment. Many of the benefits are on the development side of the house, with the promise of DevOps benefits. Running in production can be a different story.

Are Laptops Really Mobile Devices?

By Steve Brasen on Sep 25, 2015 10:39:16 AM

When people think of IT mobility, the images most immediately conjured are of smartphones and tablets. In truth, however, the mobile device landscape could be considered broader than this. The basic definition of a mobile device is simply “any computing device designed principally for portability.” By that definition, laptops should clearly be included. However, some definitions state that a mobile device must be “handheld,” indicating that size is a factor without specifying how small a device must be to earn that designation. Regardless of size limitations, those definitions still favor the inclusion of laptops, since many are available in form factors smaller than some of the larger tablets. Therefore, the defining characteristic of a mobile device must be its portability, which also happens to be the key differentiator between a laptop and a desktop PC. Logically, therefore, a laptop is, in fact, a mobile device.