The ultimate goal of the Software-Defined Datacenter (SDD), a term coined only a few months ago by VMware’s Steve Herrod, is to centrally control all aspects of the data center (compute, networking, and storage) through hardware-independent management and virtualization software. This software will also provide the advanced features that currently constitute the main differentiators for most hardware vendors. The following quote by Steve Herrod succinctly sums up the bad news that VMware is delivering to many of these vendors: “If you’re a company building very specialized hardware … you’re probably not going to love this message.”

VMware’s Search for a New Differentiator

VMware’s core business, the hypervisor, is in the process of being commoditized and threatened from more than one side:

a) The Big 4 – CA Technologies, HP, BMC, and IBM – all offer their own virtualization management solutions, encouraging the use of low-cost or free hypervisors, such as KVM. It's only natural that to maximize their own profitability, the Big 4 want customers to spend as little as possible on hypervisor technology and as much as possible on management software and services.

b) Cloud management platform (CMP) vendors, whether they are based on OpenStack, CloudStack, or their own proprietary technologies, are also not interested in customers spending their project funds on VMware’s hypervisor technologies.

This commoditization threat to its own core business model is driving VMware to aggressively expand its value proposition. The acquisition of DynamicOps and, even more so, the $1.26 billion paid for Nicira are clear evidence of VMware’s urgency in the search for new differentiators. Commoditizing more and more data center hardware will not be popular with many of VMware’s partners; however, this is a price that the company must pay if it intends to remain the virtualization leader.

Business Logic Driven Automation

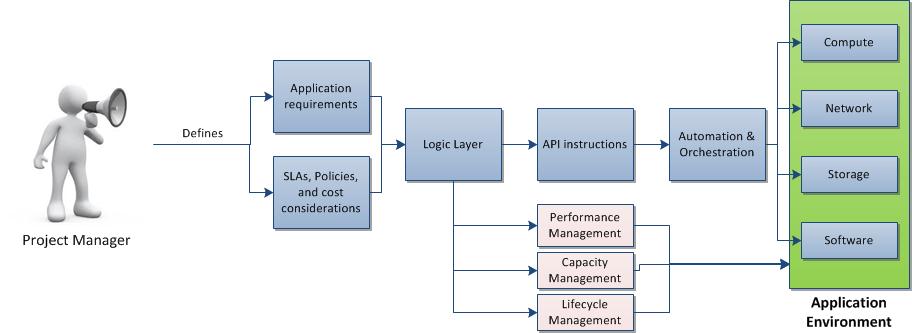

The SDD constitutes a grand vision of what enterprise IT would have to look like to optimally serve the business. The key idea behind this ideal state is to allow the application to define its own resource requirements (compute, network, storage, software) based on corporate SLA and compliance policies. To ensure the scalability, flexibility, and agility that will ultimately translate into significantly reduced OPEX, these requirement definitions must be based on business logic rather than on technical provisioning instructions. These business logic elements are then translated into a set of API instructions that enable the management and virtualization software to provision, configure, move, manage, and retire the resources relevant to the business service. In short, the SDD transforms the traditionally infrastructure-centric data center, with its focus on ensuring the proper operation of compute, network, and storage elements, into an environment focused on applications and business services. This transformation constitutes a radical paradigm shift, changing the role of IT staff from reactive service providers to proactive change agents who ensure capacity for future workloads. The SDD revolves purely around application workload demands, allowing business users to deploy and run their applications in the most efficient and SLA-compliant manner.
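To make the translation step more concrete, here is a minimal Python sketch of how a business-level policy might be turned into provisioning instructions. Everything in it is an assumption made for illustration: the `BusinessPolicy` fields, the sizing rules (one VM per 500 users, a spare VM and a second storage replica for SLAs of 99.9% or higher), and the action names do not correspond to any VMware API or product.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class BusinessPolicy:
    """Business-level requirements. All field names are hypothetical."""
    service: str
    availability_pct: float      # SLA target, e.g. 99.9
    expected_users: int          # peak concurrent users
    data_must_stay_onsite: bool  # compliance rule


def translate(policy: BusinessPolicy) -> List[Dict[str, object]]:
    """Turn a business policy into illustrative provisioning instructions."""
    high_availability = policy.availability_pct >= 99.9
    # Assumed sizing rule: one VM per 500 users, at least one VM.
    vm_count = max(1, math.ceil(policy.expected_users / 500))
    # Assumed rule: high-availability services get one spare VM.
    if high_availability:
        vm_count += 1
    location = "on_premises" if policy.data_must_stay_onsite else "any"
    return [
        {"action": "provision_compute", "service": policy.service,
         "vms": vm_count},
        {"action": "configure_network", "service": policy.service,
         "isolated": policy.data_must_stay_onsite},
        {"action": "allocate_storage", "service": policy.service,
         "location": location, "replicas": 2 if high_availability else 1},
    ]


if __name__ == "__main__":
    # The business user states what the service needs, not how to build it.
    for instruction in translate(BusinessPolicy("payroll", 99.9, 1200, True)):
        print(instruction)
```

The point of the sketch is the separation of concerns: the policy object contains no technical provisioning detail, so the translation rules can change without the business user rewriting any requirement.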

By definition, the SDD is future-proof, as it relies on requirement definitions that are abstracted from the actual data center automation and orchestration layer. There is no army of clever coders at the heart of the SDD, who simply tweak the automation and orchestration tool each time a new application workload, SLA, or corporate policy comes along. The SDD has to be able to “autonomously” provide the type of application environment that ensures the reliability, performance, and security required by the business users (see Figure 1).

To ensure scalability, elasticity, and cost efficiency, the SDD will run on commodity hardware. All automation and management tasks are unbundled from storage, network, and compute components and provided as a central software solution. vSphere and vCenter have shown how to effectively achieve this goal for major parts of the compute layer, commoditizing today’s servers to a significant degree. Performance, capacity, and lifecycle management, formerly highly complex datacenter disciplines, will be inherent in the DNA of the SDD in its ultimate state.

Eventually, the SDD should resolve the ongoing antagonism between IT operations and business staff, as the former will be responsible for creating sufficient capacity within the SDD, whereas the latter will set the business rules that enable the SDD to accommodate workloads in an SLA-compliant manner. The desired application environment will be provisioned automatically, and workloads will be placed based on SLA, policy, and cost efficiency considerations.
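The placement logic described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not how any actual product schedules workloads: the `Pool` and `Workload` types, their fields, and the "cheapest compliant pool wins" rule are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Pool:
    """A capacity pool provided by IT operations (hypothetical model)."""
    name: str
    sla_pct: float        # availability the pool can guarantee
    is_public: bool       # True for public cloud capacity
    cost_per_hour: float  # cost per VM per hour
    free_vms: int


@dataclass(frozen=True)
class Workload:
    """Business rules attached to a workload (hypothetical model)."""
    name: str
    required_sla_pct: float
    allow_public_cloud: bool  # corporate policy decision
    vms: int


def place(workload: Workload, pools: List[Pool]) -> Optional[Pool]:
    """Return the cheapest pool that satisfies SLA, policy, and capacity."""
    candidates = [
        pool for pool in pools
        if pool.sla_pct >= workload.required_sla_pct              # SLA
        and (workload.allow_public_cloud or not pool.is_public)   # policy
        and pool.free_vms >= workload.vms                         # capacity
    ]
    # Cost efficiency decides among compliant pools; None if nothing fits.
    return min(candidates, key=lambda pool: pool.cost_per_hour, default=None)


if __name__ == "__main__":
    pools = [
        Pool("onprem-gold", 99.99, False, 0.40, 10),
        Pool("onprem-silver", 99.5, False, 0.25, 10),
        Pool("public-cloud", 99.9, True, 0.10, 1000),
    ]
    for workload in (Workload("crm", 99.9, False, 4),
                     Workload("batch-reports", 99.0, True, 20)):
        chosen = place(workload, pools)
        print(workload.name, "->", chosen.name if chosen else "no capacity")
```

In this division of labor, IT operations maintains the list of pools while business staff only set the fields on the workload, which mirrors the split of responsibilities described in the paragraph.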

The Software Defined Datacenter vs. Private Cloud

Google and Amazon are generally envied for their incredibly low OPEX per server and for their extreme scalability. However, we must not forget that simply creating a Google- or Amazon-like private cloud is not the answer for most organizations, as there are many existing workloads that will not run in this type of highly standardized environment. Organizations often do not even have the source code available to recompile certain legacy applications to run in the cloud. In contrast, the SDD, with some input from the project manager, will be able to determine a specific legacy application’s requirements from a technical and SLA perspective and create the appropriate runtime environment, one that simply emulates the non-virtualized legacy infrastructure on which the application has run for years or even decades (again, we are talking about a vision). To take advantage of Amazon’s or Google’s economies of scale, the SDD can place certain workloads in the public cloud, as long as a sufficiently granular and rigid SLA is available.

Next week, we will discuss the three core components of the SDD: Software-Defined Networking, Storage Hypervisor, and Server Virtualization.

Further reading material:

Interop and the Software-Defined Datacenter (Steve Herrod, May 8, 2012)

GIGAOM - VMware: 'The software-defined data center is coming' (Derrick Harris, May 9, 2012)

VMware and Nicira - Advancing the Software-Defined Datacenter (Steve Herrod, July 23, 2012)