(You can read more about the content of the EMA Top 3 - Enterprise Decision Guide in my first post.)

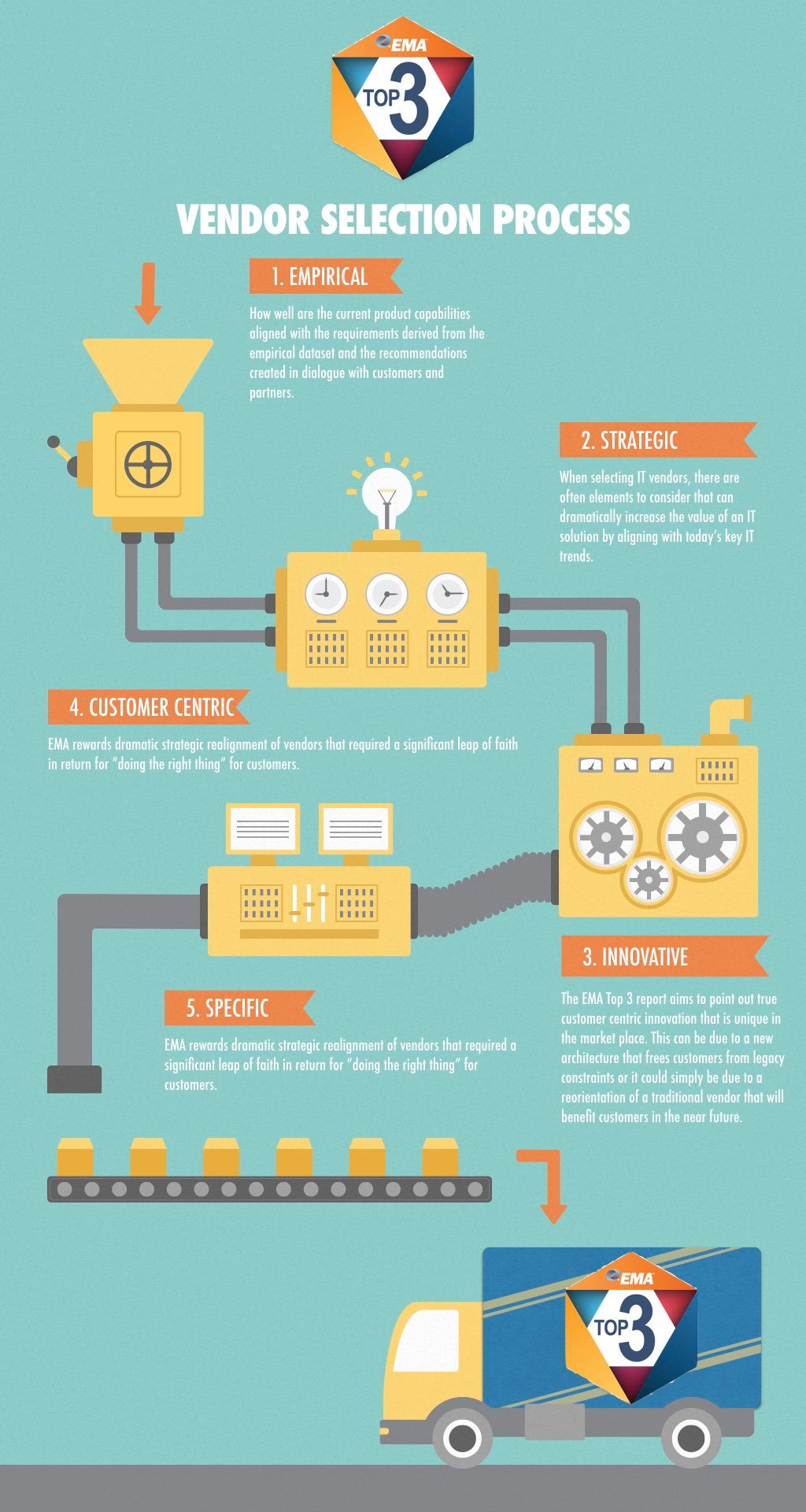

EMA Top 3 Product Selection Process

By Torsten Volk on Jun 16, 2017 5:14:24 PM

The EMA Top 3 Report: Ten Priorities for Hybrid Cloud, Containers, and DevOps in 2017

By Torsten Volk on Jun 15, 2017 4:34:31 PM

Dell EMC World – The Easy Button for Digital Transformation

By Torsten Volk on May 15, 2017 8:44:12 AM

“It feels like magic but it is technology,” and “we will help transform every company into a software company,” were the two quotes by Michael Dell that best summed up the spirit of Dell EMC World 2017. These statements show the genuine excitement of a seasoned tech executive about tackling the next challenge of his career: merging the Dell EMC brands (Pivotal, VMware, RSA, SecureWorks, and Virtustream) into one highly differentiated IT powerhouse.

New EMA Research: 68% of Enterprises are Evaluating Containers TODAY

By Torsten Volk on Apr 27, 2017 12:59:03 PM

EMA's latest research shows that 68% of enterprises are in the process of evaluating container technologies. Why is everyone so fascinated by containers today? It reminds me of the OpenStack mania of 2013. At the time I was convinced that VMware had set out to crush the hype, while IBM, Rackspace, and a ton of VC-funded startups oversold OpenStack to the highest degree. I still have my collection of USB sticks with OpenStack distributions from Piston, Mirantis, and friends. Claiming that all I had to do was plug these sticks into any piece of metal and I'd have Amazon EC2 running right under my desk was not a great idea. It ultimately led to a degree of frustration that made the Microsoft and VMware tax look attractive and turned Amazon Web Services into a $4.5 billion business.

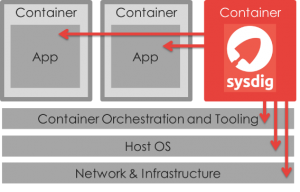

Full Stack Container Monitoring – A Whole New Animal

By Torsten Volk on Apr 19, 2017 11:52:20 PM

Traditional IT infrastructure monitoring focuses on hypervisor hosts, storage, and VMs. Application impact is tracked through vSphere resource tagging. This simple and mostly static drill-down approach to full-stack monitoring no longer works for modern microservices-based computing.

InterConnect 2017 – Showing Off a Whole New IBM

By Torsten Volk on Mar 24, 2017 10:31:12 AM

"Today, a dev team leveraging Kubernetes containers can get a cloud app up in minutes." This statement by Arvind Krishna, IBM’s GM for Hybrid Cloud, at the beginning of his InterConnect 2017 keynote should have received a lot more recognition than it did. This one sentence shows the fundamental shift in IBM’s strategy, away from the old Tivoli-centric IT ops company and toward a modern DevOps-focused organization that is looking for differentiation up the stack. Today’s IBM encourages developers to deploy entire application environments without IT administrators even being aware.

Running Containers Doesn’t Have To Mean Running Blind

By Dan Twing on Oct 6, 2015 2:17:15 PM

The idea of containers has been around for a long time in various forms on various operating systems, and it has been part of the Linux kernel since version 2.6.24 was released in 2008. However, containers did not become mainstream until a couple of years ago, after Docker was first released in March 2013. Docker introduced container management tools and a packaging format, which made container technologies accessible to developers without Linux kernel expertise. By simplifying the way applications are packaged, Docker led the way in making containers mainstream and one of the hottest trends in application development and deployment. While this has big advantages, containers are still early in their lifecycle and lack operational maturity. The ease with which Docker images can be created leads to image sprawl, a problem previously seen with VMs, and makes it harder to manage the security and compliance of these images. Container environments do not integrate well with existing developer tools, and the lack of staging and versioning for promotion from preproduction to production complicates team development. Containers also do not integrate with existing monitoring tools, which complicates management. However, an ever-growing Docker community is developing new tools that target Docker as an application delivery format and execution environment. Many of the benefits are on the development side of the house, with the promise of DevOps benefits. Running in production can be a different story.